Optimizing workflows using GitOps, ArgoCD and Kustomize

In our team, Team PaaS, we set aside one day a week for experiments, so that we can get familiar with new tools, technologies, and concepts that help us improve our systems and team workflows.

One outcome of these days is our implementation of GitOps. We want to show you what we achieved and how happy we are with it, but we will also talk about the new challenges we are facing, especially as the system grows and the number of workloads increases.

Do you have workloads stored in a Git repository, and do you plan to deploy them and their changes to Kubernetes clusters that run in different environments with different configurations? If so, the GitOps [1] concept can help you automate the workflow and achieve continuous delivery and deployment.

We faced the same situation and implemented this concept using ArgoCD [2] and Kustomize [3].

Troubles without GitOps

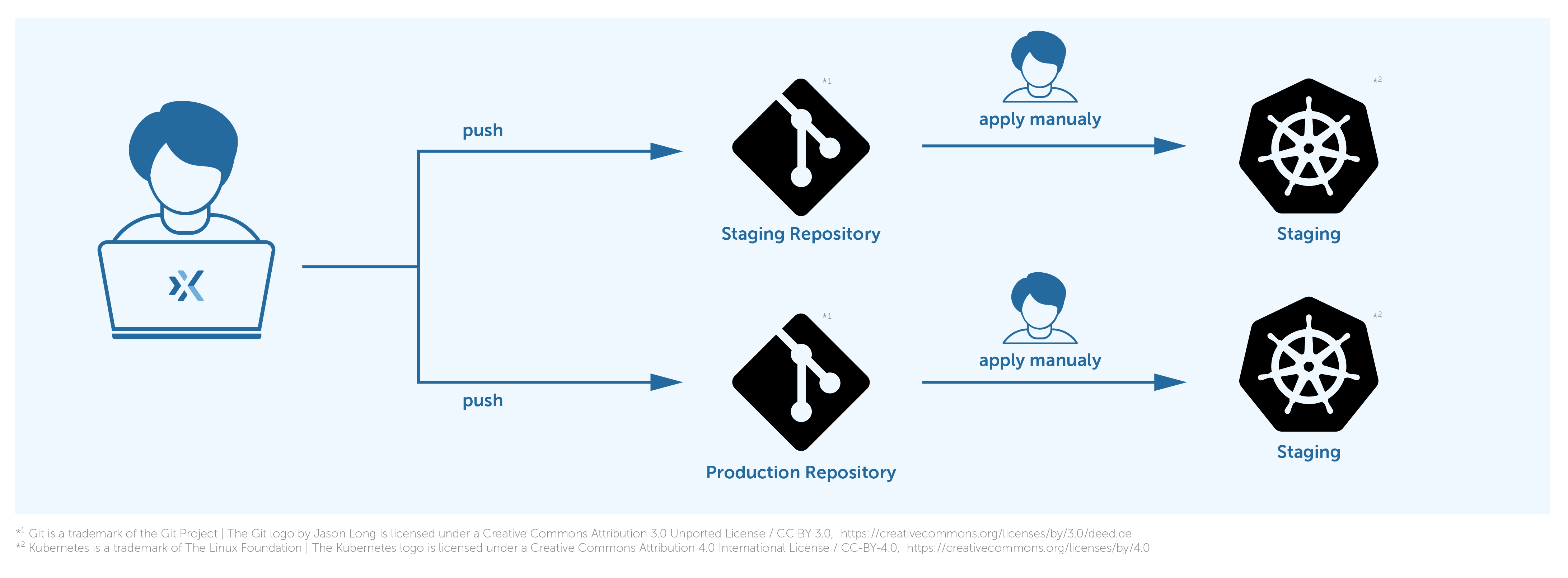

We have three environments: dev, staging, and production. Each environment has its own Kubernetes clusters. Our workloads used to be stored in multiple Git repositories, and we had to deploy them and their changes to the dev, staging, and production environments in turn.

We used to do that manually with the kubectl [4] tool, after each change and whenever a new workload was introduced. At the beginning this was fine, although it involved some manual work. We had to duplicate the code of the workloads and adapt it for each environment.

As the image shows, there used to be one Git repository per environment (only staging and production are shown). We pushed changes to the staging repository and then deployed them manually on the staging cluster.

Over time, the number of workloads increased, and deploying manually became harder and more complex. We always had to take care to propagate changes at the right time to the repositories of the other environments. Because we did this work by hand, it became more and more obvious that the differences between the environments were growing and that applying changes was becoming more tedious.

Therefore, to overcome these troubles, we set out to automate the tasks, and that is how we got to know GitOps.

How we implemented GitOps

GitOps is a concept, not a tool; however, many tools are available to implement it. Some of the most popular ones are ArgoCD, FluxCD [5], and Jenkins X [6]. Each has its own advantages and disadvantages.

After our evaluation, we found that ArgoCD is the best match for us. It supports fetching workloads from multiple Git repositories and deploying them to multiple Kubernetes clusters as destinations. Furthermore, it understands Helm [7] and Kustomize in addition to directories of plain YAML and JSON manifests.

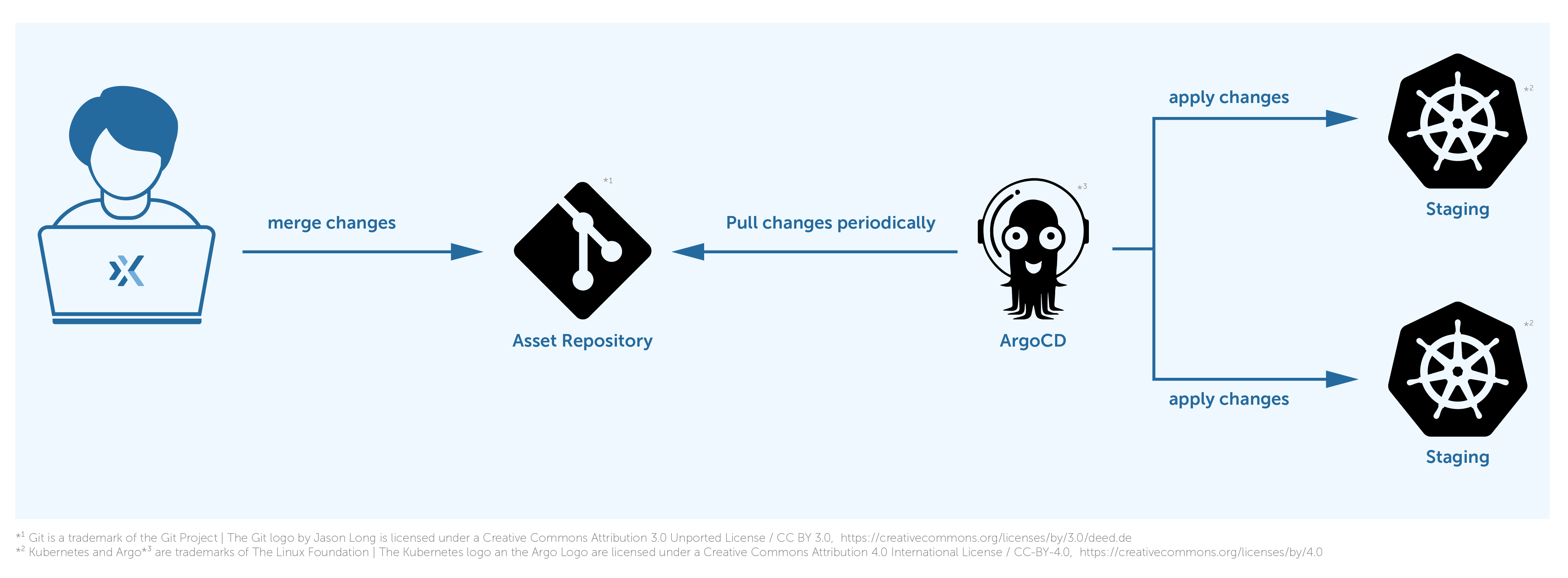

At this point we had automated the deployment of the workloads using ArgoCD, but one problem still bothered us: the growing differences between the environment repositories. To alleviate that, we merged all the repositories into one, named “assets”, and used Kustomize to define each workload once, with a specific configuration for each environment. This way, we resolved the code drift between environments.

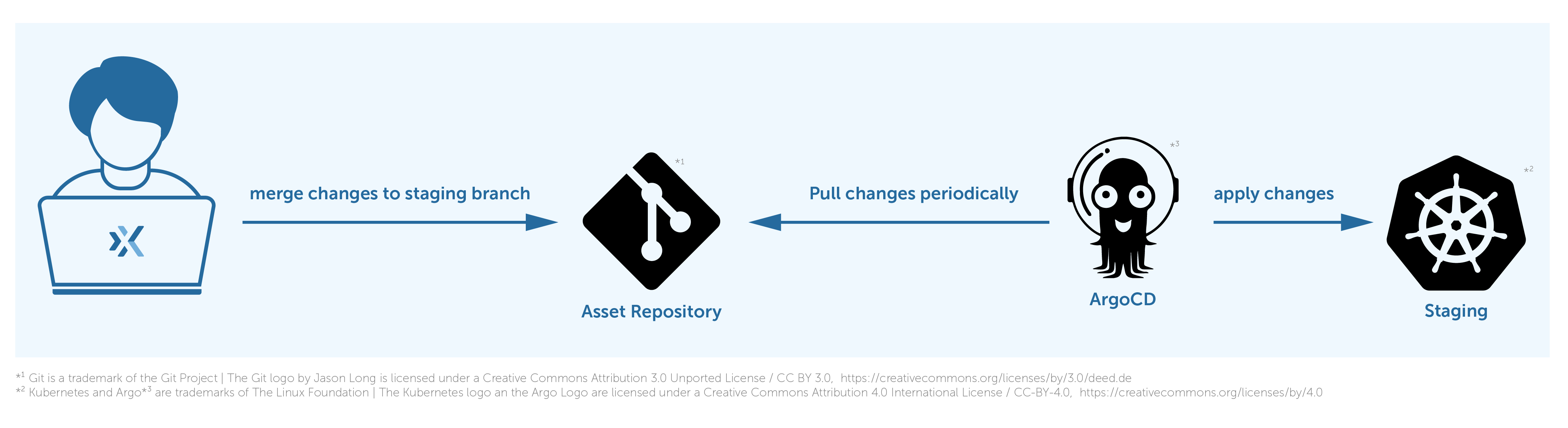

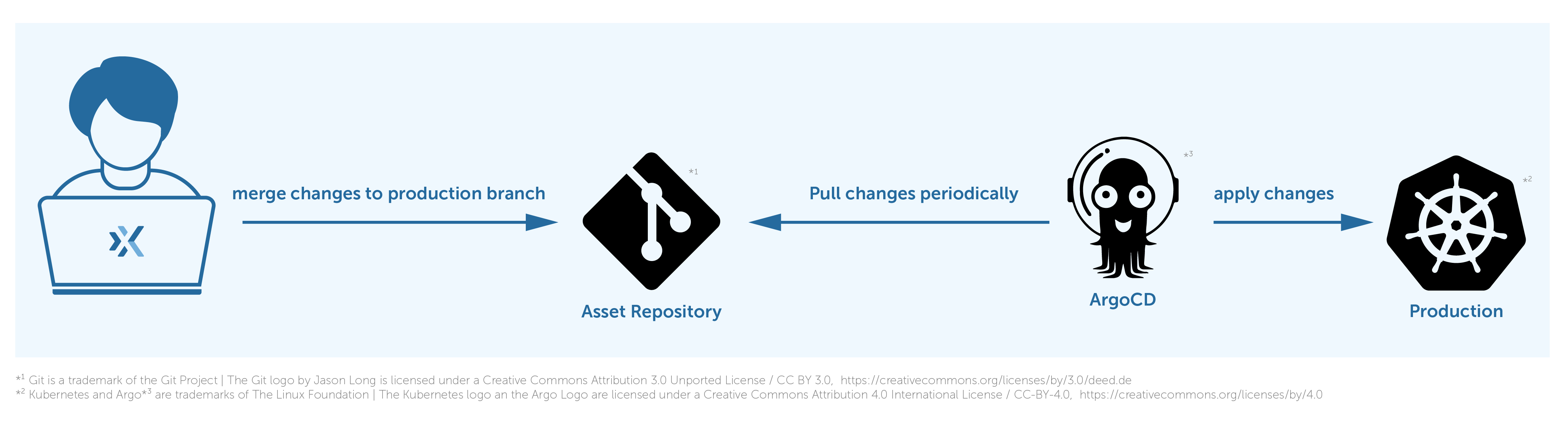

The following image shows how it works.

ArgoCD periodically pulls the changes from the repository and deploys them to the proper destination.
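How often ArgoCD polls the repository is configurable. As a hedged sketch: in the ArgoCD releases we are aware of, the interval is read from the `timeout.reconciliation` key of the `argocd-cm` ConfigMap. The `argocd` namespace and the interval value below are illustrative assumptions, and the default differs between ArgoCD versions.

```yaml
# Sketch: fragment of the argocd-cm ConfigMap.
# Assumption: ArgoCD is installed in the "argocd" namespace.
apiVersion: v1
kind: ConfigMap
metadata:
  name: argocd-cm
  namespace: argocd
  labels:
    app.kubernetes.io/name: argocd-cm
    app.kubernetes.io/part-of: argocd
data:
  # How often ArgoCD checks Git for new commits (illustrative value).
  timeout.reconciliation: 180s
```

In a Kustomize-managed ArgoCD installation, a fragment like this would typically be applied as a patch on top of the upstream ConfigMap rather than as a standalone resource, so that other keys are not lost.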

For example, to deploy changes to the production cluster, we first push them to the staging branch of our repository, which triggers a deployment on the staging cluster.

Then we cherry-pick those changes onto the production branch to propagate them to the production cluster.

ArgoCD deploys the staging overlay from the staging branch to the staging Kubernetes cluster and, likewise, the production overlay from the production branch to the production Kubernetes cluster. We use separate branches (staging and production) to separate the deployment stages, and separate overlays to define a dedicated configuration for each environment.
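To make the branch and overlay mapping concrete, here is a minimal sketch of what an ArgoCD application for the staging environment could look like. The repository URL, cluster address, application name, namespaces, and directory path are hypothetical placeholders; only the field names come from the ArgoCD Application resource.

```yaml
# Sketch: one ArgoCD Application per workload and environment.
# All names, URLs, and paths are hypothetical.
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: cert-manager-staging
  namespace: argocd                 # assumed ArgoCD namespace
spec:
  project: default
  source:
    repoURL: https://git.example.com/paas/assets.git
    targetRevision: staging         # branch that represents the stage
    path: cert-manager/overlays/staging   # Kustomize overlay for the stage
  destination:
    server: https://staging-cluster.example.com:6443
    namespace: cert-manager
  syncPolicy:
    automated:
      prune: true                   # remove resources that were deleted in Git
      selfHeal: true                # revert manual changes made on the cluster
```

The production counterpart would differ only in `targetRevision`, `path`, and `destination.server`. Because the path contains a kustomization file, ArgoCD renders it with Kustomize without further configuration.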

There is still one thing left. We used to use git-crypt [8] to encrypt sensitive data before storing it in Git, but it is tricky to use alongside ArgoCD. ArgoCD is unopinionated about how secrets are managed. Therefore, we decided to replace git-crypt with another secret management tool.

Many tools are available that can be used alongside ArgoCD [9], but we selected Sealed Secrets [10] since it is straightforward to use and designed as a cloud-native secret management tool.
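For illustration, a sealed secret stored in Git has roughly the following shape. The names and the namespace are hypothetical, and the ciphertext is deliberately left as a placeholder, because it is only produced by sealing a real secret against the controller's public key.

```yaml
# Sketch: a SealedSecret as it could be committed to Git.
# Names and namespace are hypothetical; the value is a placeholder.
apiVersion: bitnami.com/v1alpha1
kind: SealedSecret
metadata:
  name: api-credentials
  namespace: monitoring
spec:
  encryptedData:
    # Ciphertext generated by the sealing tool, never the plain value.
    password: "<ciphertext-produced-by-kubeseal>"
  template:
    metadata:
      name: api-credentials
      namespace: monitoring
    type: Opaque
```

Once such a resource is applied, the controller running in the cluster decrypts it and creates a regular Kubernetes Secret with the same name, so only the encrypted form ever needs to live in the repository.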

Image 1

Image 2

What a relief!

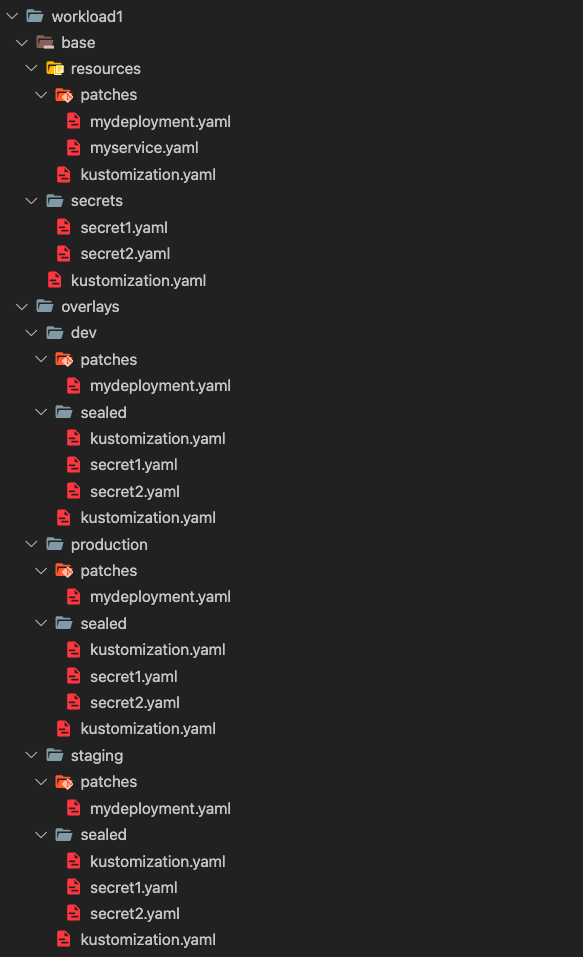

We were able to get rid of the troubles mentioned above by implementing GitOps in our setup, and it is working as expected. As mentioned, we have merged all the repositories into one repository. Here is how we are managing multiple environments in one repository.

For each workload, e.g., cert-manager, prometheus, grafana, etc., we have a dedicated directory. We follow the base-overlay [11] structure to enable a multi-environment, multi-configuration setup. The base [12] directory contains the code and secrets shared between all environments. The overlays [13] directory contains one directory per environment, which holds the dedicated configuration files and patches [14]. You can find more information about Kustomize in [15]. The sealed directory contains secrets that have been encrypted via the Sealed Secrets controller. Each overlay can have patches that customize parts of the configuration, for instance to tune the resources of a deployment: the dev and staging environments do not need the same resources as production.
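As a hedged sketch of such a resource-tuning patch, the following fragment could live in the staging overlay of a workload. The deployment name, container name, and all values are hypothetical; the patch only works if the names match a Deployment defined in the base.

```yaml
# Sketch: patch that overrides only the resource settings of a Deployment.
# Deployment name, container name, and values are hypothetical.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: grafana
spec:
  template:
    spec:
      containers:
        - name: grafana            # must match the container name in the base
          resources:
            requests:
              cpu: 100m
              memory: 128Mi
            limits:
              cpu: 250m
              memory: 256Mi
```

Kustomize merges this fragment into the Deployment from the base, so everything that is not mentioned in the patch stays identical across environments.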

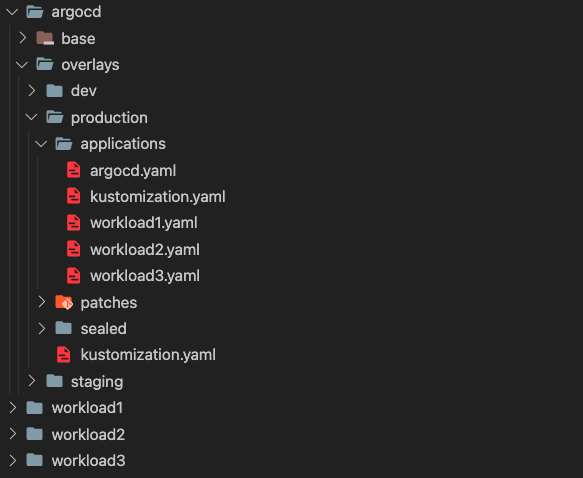

Finally, the main kustomization file of each overlay includes the base, sealed, and patches directories. We manage ArgoCD itself like any other workload and treat ArgoCD applications [16] as its resources.

As you can see in image 2, all the applications are located in the applications directory.
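A minimal sketch of such an overlay kustomization file is shown here. It assumes that the sealed and patches directories sit inside the overlay directory and that the overlay is two levels below the workload directory; the file names are hypothetical.

```yaml
# Sketch: <workload>/overlays/staging/kustomization.yaml
# Directory layout and file names are assumptions.
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization

resources:
  - ../../base                     # manifests shared by all environments
  - sealed/api-credentials.yaml    # hypothetical SealedSecret for this environment

patches:
  - path: patches/deployment-resources.yaml   # hypothetical per-environment tuning
```

Running `kustomize build` on the overlay directory renders the final manifests for that environment, which is also what ArgoCD does when an application points at this path.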

In this case, those applications are being managed by the ArgoCD instance of the production environment.

Challenges in our setup

For all the advantages this setup brings, it also adds complexity that poses challenges for us as administrators and maintainers, such as:

- A complex and possibly confusing directory structure, especially as the number of workloads increases.

- Changes to a secret must be sealed before they are pushed to the repository, and we still do this manually. There is room for optimization here by moving this extra step into the CI pipeline.

- After adding a new workload, we must remember to add the corresponding ArgoCD application if we want ArgoCD to manage its lifecycle. This is not done automatically yet.

- Writing a CI pipeline for this repository is not straightforward, because we first have to detect which workload has changed and then take the proper action based on that.

- Lack of knowledge about ArgoCD before rolling it out to production can cause serious problems. For example, by default ArgoCD performs a cascading delete when an application is deleted. If you are not aware of this, you can easily break things, especially if you manage ArgoCD with ArgoCD. 🤯

- Changes to the code in the repository can take longer than before to reach the environments, because you first have to push the changes, merge the pull request or merge request, and then wait for the ArgoCD application controller to propagate them. This can be bothersome, especially during debugging.
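The cascading-delete challenge can be illustrated with the finalizer that ArgoCD documents for declaratively managed applications. As far as we understand the ArgoCD documentation, an application that carries `resources-finalizer.argocd.argoproj.io` has its deployed resources removed when the application object itself is deleted, while an application without it leaves them in place. The names, URL, and path in this sketch are hypothetical.

```yaml
# Sketch: controlling cascading deletion on a declarative Application.
# Names, repository URL, and path are hypothetical.
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: grafana-production
  namespace: argocd                # assumed ArgoCD namespace
  finalizers:
    # With this finalizer, deleting the Application also deletes the
    # resources it deployed. Omit it if the workload should survive
    # the removal of its Application object.
    - resources-finalizer.argocd.argoproj.io
spec:
  project: default
  source:
    repoURL: https://git.example.com/paas/assets.git
    targetRevision: production
    path: grafana/overlays/production
  destination:
    server: https://kubernetes.default.svc   # the cluster ArgoCD runs in
    namespace: monitoring
```

Deleting an application through the CLI or the UI may still cascade depending on the options chosen, so it is worth trying this on a non-production cluster first.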

Conclusion

We are leveraging ArgoCD and Kustomize to achieve multi-environment continuous delivery and deployment: ArgoCD periodically polls the Git repository for new changes and rolls them out to the destination Kubernetes clusters. The repository described above is publicly accessible at [17].

References

1. https://www.weave.works/technologies/gitops/

2. https://argo-cd.readthedocs.io/en/stable/

3. https://kustomize.io/

4. https://kubernetes.io/docs/reference/kubectl/overview/

5. https://fluxcd.io/

6. https://jenkins-x.io/

7. https://helm.sh/

8. https://github.com/AGWA/git-crypt

9. https://argo-cd.readthedocs.io/en/stable/operator-manual/secret-management/

10. https://github.com/bitnami-labs/sealed-secrets

11. https://kubernetes.io/docs/tasks/manage-kubernetes-objects/kustomization/#bases-and-overlays

12. https://kubectl.docs.kubernetes.io/references/kustomize/glossary/#base

13. https://kubectl.docs.kubernetes.io/references/kustomize/glossary/#overlay

14. https://kubectl.docs.kubernetes.io/references/kustomize/glossary/#patch

15. https://kubectl.docs.kubernetes.io/references/kustomize/

16. https://argo-cd.readthedocs.io/en/stable/operator-manual/declarative-setup/

17. https://gitlab.x-ion.de/paas-public/gitops-example

Author: Ahmad Babaei Moghadam, Team PaaS

©x-ion GmbH
